
Swish activation function

4/12/2023

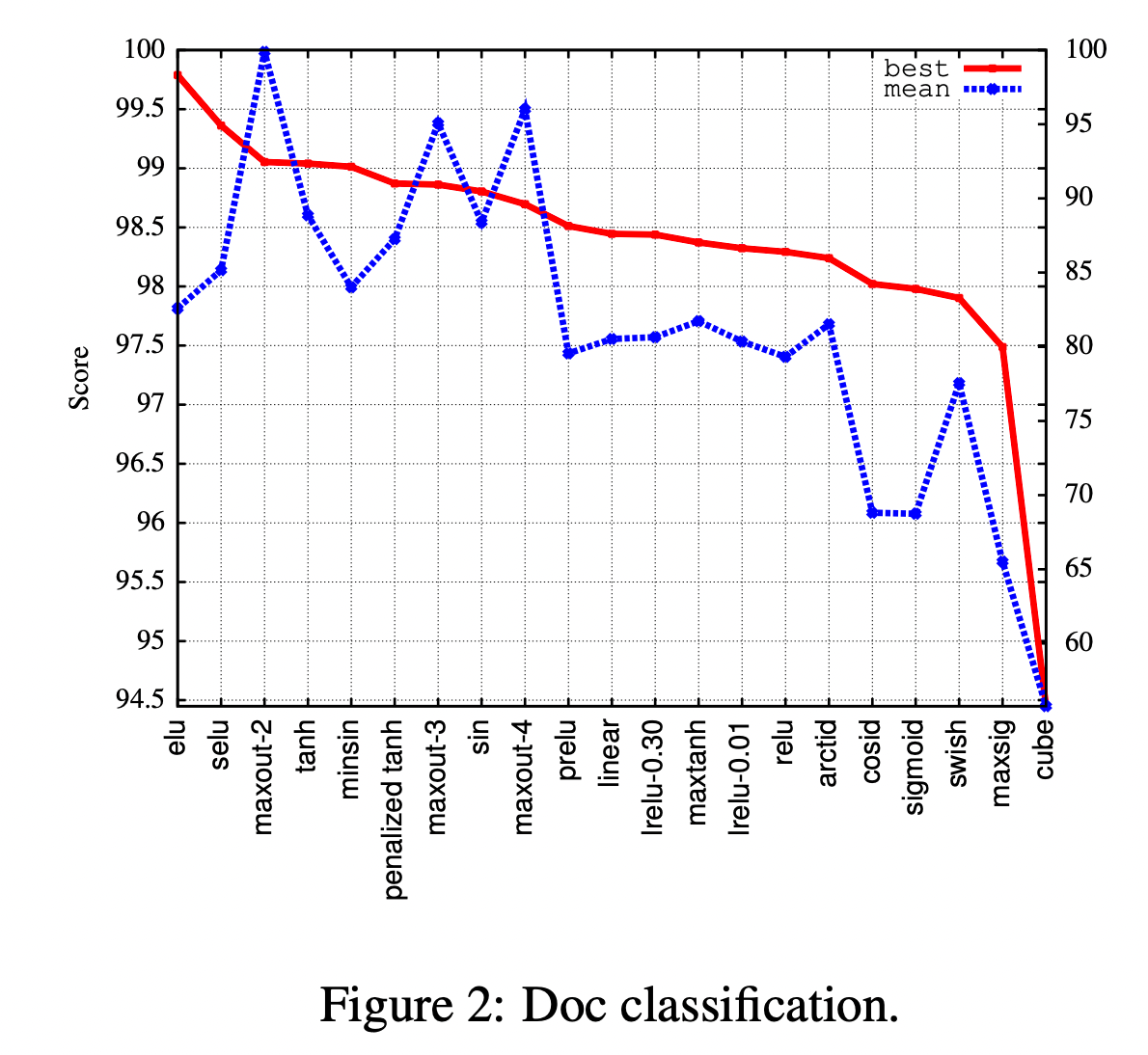

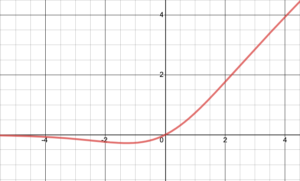

In this article, we will talk about the Swish activation function and how it works. For a description of another activation function, the Exponential Linear Unit (ELU), see this article: ELU.

An activation function can only produce useful output from useful input: if the input itself is useless and the algorithm can't make sense of it, then the output is consequently useless as well. In some cases, neurones become saturated or dead, meaning there is little or no variation in their outputs, depending on the nature of the inputs and the activation function used. Saturation means all outputs are roughly equal, which makes them not very useful to the layers that follow. Saturated and dead neurones make it hard for a model to learn during backpropagation.

Since I have introduced the term propagation, I think it is necessary to briefly explain what it is. Propagation in AI is just the flow of information through the network for making decisions and learning. There is forward propagation and backward propagation.

Forward propagation goes from the input layer to the output layer. This is the process of the model doing what it was designed to do. It processes information layer by layer: each layer has a set of neurones with activation functions, whose outputs are passed on to the neurones in the next layer.

Backward propagation goes from the output layer to the input layer. This is how the model learns from the steps it took to arrive at a prediction. An error is calculated by comparing the prediction with the expected output. When the prediction is wrong, the error is large and backpropagation finds ways to correct the decisions that led to it; when it is right, the error is small, so those decisions are largely kept and can be reused when a similar situation arises. It does this by using the chain rule to compute the derivative of the error with respect to the weights of each neurone on each layer, and those derivatives include the derivative of each neurone's activation function. This is why learning is harder with dead or saturated neurones: where the activation function's derivative is zero or close to zero, almost no correction flows back through that neurone.
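To make the link between activation-function derivatives and learning concrete, here is a minimal sketch of forward and backward propagation through a single neurone, $a = g(wx + b)$, with squared error $L = \frac{1}{2}(a - t)^2$. It assumes a toy setup (one input, one weight, one bias, plain Python floats); the helper names such as `weight_gradient` are hypothetical and not taken from any library. The chain rule multiplies in $g'(z)$, so when a sigmoid neurone is saturated (large $|z|$) or a ReLU neurone is dead ($z < 0$), the weight gradient shrinks towards zero even if the prediction is badly wrong.

```python
import math
from typing import Callable


def sigmoid(z: float) -> float:
    """Logistic sigmoid, written to avoid overflow for large |z|."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def sigmoid_grad(z: float) -> float:
    """Derivative of the sigmoid: s * (1 - s)."""
    s = sigmoid(z)
    return s * (1.0 - s)


def relu(z: float) -> float:
    return max(0.0, z)


def relu_grad(z: float) -> float:
    # The derivative is undefined at z == 0; returning 0 there is a common convention.
    return 1.0 if z > 0 else 0.0


def weight_gradient(x: float, w: float, b: float, target: float,
                    act: Callable[[float], float],
                    act_grad: Callable[[float], float]) -> float:
    """Hypothetical helper: one forward and one backward pass for a single neurone.

    Loss is L = 0.5 * (a - target)**2; returns dL/dw = dL/da * da/dz * dz/dw.
    """
    # Forward propagation: input -> pre-activation -> activation (the prediction).
    z = w * x + b
    a = act(z)
    # Backward propagation: the error flows back through the activation's derivative.
    dL_da = a - target
    da_dz = act_grad(z)
    dz_dw = x
    return dL_da * da_dz * dz_dw


if __name__ == "__main__":
    # Same input; only the weight (and hence z = w * x) changes.
    for w in (0.5, 10.0):
        g = weight_gradient(x=1.0, w=w, b=0.0, target=0.0,
                            act=sigmoid, act_grad=sigmoid_grad)
        print(f"sigmoid, z = {w:5.1f}: |dL/dw| = {abs(g):.6f}")
    for w in (0.5, -2.0):
        g = weight_gradient(x=1.0, w=w, b=0.0, target=1.0,
                            act=relu, act_grad=relu_grad)
        print(f"relu,    z = {w:5.1f}: |dL/dw| = {abs(g):.6f}")
```

In the saturated sigmoid case ($z = 10$) the prediction is close to $1$ while the target is $0$, yet the gradient is thousands of times smaller than at $z = 0.5$; in the dead ReLU case ($z = -2$) it is exactly zero, so gradient descent cannot move that weight at all.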
Pre-requisite: Types of Activation Functions used in Machine Learning, Overview of Neural Networks and the use of Activation functions.

The mechanism of neural networks works similarly to the human brain: when a neural network is fed a lot of data, it tries to separate useful data from useless data. Activation functions are the algorithms that the neurones use to do this sorting. Basically, an activation function determines a neurone's output based on its input.

I will give you the equation, the differentiated equation and plots for both of them. The goal is to explain the equations and graphs in simple input-output terms.

Swish was developed by researchers at Google as an alternative to the Rectified Linear Unit (ReLU).

ELU, by comparison, produces negative outputs, which helps the network nudge weights and biases in the right directions, and it produces activations instead of letting them be zero when calculating the gradient.

Figure: The ELU activation function differentiated.

The derivative outputs $1$ if $x$ is greater than $0$. If the input $x$ is less than zero, it outputs the ELU function (not differentiated) plus the alpha value $\alpha$. As you might have noticed, we avoid the dead ReLU problem here while still keeping some of the computational speed gained by the ReLU activation function; that is, we will still have some dead components in the network.

I also show you the vanishing and exploding gradient problems; for the latter, I follow Nielsen's great example of why gradients might explode. At last, I provide some code that you can run for yourself in a Jupyter Notebook. From the small code experiment on the MNIST dataset, we obtain a loss and accuracy graph for each activation function.

In this article, we have explored the Swish activation function in depth.
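The section names Swish without writing it down. In its commonly used form, Swish is $f(x) = x\,\sigma(\beta x)$, where $\sigma$ is the logistic sigmoid and $\beta$ is a constant or trainable parameter (with $\beta = 1$ it is also known as SiLU). Differentiating with the product rule gives $f'(x) = \beta f(x) + \sigma(\beta x)\,(1 - \beta f(x))$. ELU is $x$ for $x > 0$ and $\alpha(e^x - 1)$ otherwise, so its derivative is $1$ for $x > 0$ and $\alpha e^x = \mathrm{ELU}(x) + \alpha$ otherwise, matching the description of the ELU derivative. Here is a minimal plain-Python sketch of both functions and their derivatives; the function names and the defaults $\alpha = 1$, $\beta = 1$ are my own assumptions, and the finite-difference check is only a sanity test of the formulas.

```python
import math
from typing import Callable


def sigmoid(x: float) -> float:
    """Logistic sigmoid, written to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def swish(x: float, beta: float = 1.0) -> float:
    """Swish: x * sigmoid(beta * x). beta = 1 is an assumed default (SiLU)."""
    return x * sigmoid(beta * x)


def swish_grad(x: float, beta: float = 1.0) -> float:
    """d/dx swish = beta * f(x) + sigmoid(beta * x) * (1 - beta * f(x))."""
    f = swish(x, beta)
    return beta * f + sigmoid(beta * x) * (1.0 - beta * f)


def elu(x: float, alpha: float = 1.0) -> float:
    """ELU: x for x > 0, alpha * (exp(x) - 1) otherwise. alpha = 1 is assumed."""
    return x if x > 0 else alpha * (math.exp(x) - 1.0)


def elu_grad(x: float, alpha: float = 1.0) -> float:
    """1 for x > 0; otherwise ELU(x) + alpha, which equals alpha * exp(x)."""
    return 1.0 if x > 0 else elu(x, alpha) + alpha


def numeric_grad(fn: Callable[[float], float], x: float, eps: float = 1e-6) -> float:
    """Central finite-difference estimate, used only to sanity-check the formulas."""
    return (fn(x + eps) - fn(x - eps)) / (2.0 * eps)


if __name__ == "__main__":
    print("    x   relu   swish  swish'     elu    elu'")
    for x in (-5.0, -1.0, -0.5, 0.5, 1.0, 5.0):
        print(f"{x:5.1f}  {max(0.0, x):5.2f}  {swish(x):6.3f}  {swish_grad(x):6.3f}  "
              f"{elu(x):6.3f}  {elu_grad(x):6.3f}")
        # The closed-form derivatives should agree with the numerical estimate.
        assert abs(swish_grad(x) - numeric_grad(swish, x)) < 1e-6
        assert abs(elu_grad(x) - numeric_grad(elu, x)) < 1e-6
```

Unlike ReLU, Swish is smooth and non-monotonic: for $\beta = 1$ it dips to a minimum of about $-0.28$ near $x \approx -1.28$, and its gradient for negative inputs is generally non-zero (apart from that single minimum point), whereas ReLU's gradient is exactly zero for every negative input.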
Activation functions can be a make-or-break part of a neural network. In this extensive article (>6k words), I'm going to go over 6 different activation functions, each with pros and cons.
